For the block “Teaching With Data”, I have chosen to visualize the number of comments I made in 10 academic papers I read in the past year. I picked these ten because they were the most easily found. The idea came to me when thinking about the paper by Brown (2020), which observes that “A homework assignment started in the cloud registers trace data about each interaction between students and the tool. A homework handout leaves with a student and only returns when it is ready to be submitted” (p.12).

I’ve debated a lot between reading papers physically and reading them digitally, and in the end the convenience of being able to search through comments and text digitally won out. However, if I were being assessed based on my digital interactions with these papers, what would that look like? What would my interactions say about how much I had “learnt”? When I set out to create the visualizations, I kept thinking of ways to set the data out in a way that “made sense”. When I thought more about what I was actually doing when “making sense” of the data, I realized I was finding the best way to perform a comparison. The data made “most sense” only when it was presented so that the contrasts within it were easy to capture and compare. Out of interest to see what it might look like to visualize for ease of comparison, versus not, I created two visualizations, both capturing the same data, visualized in different ways.

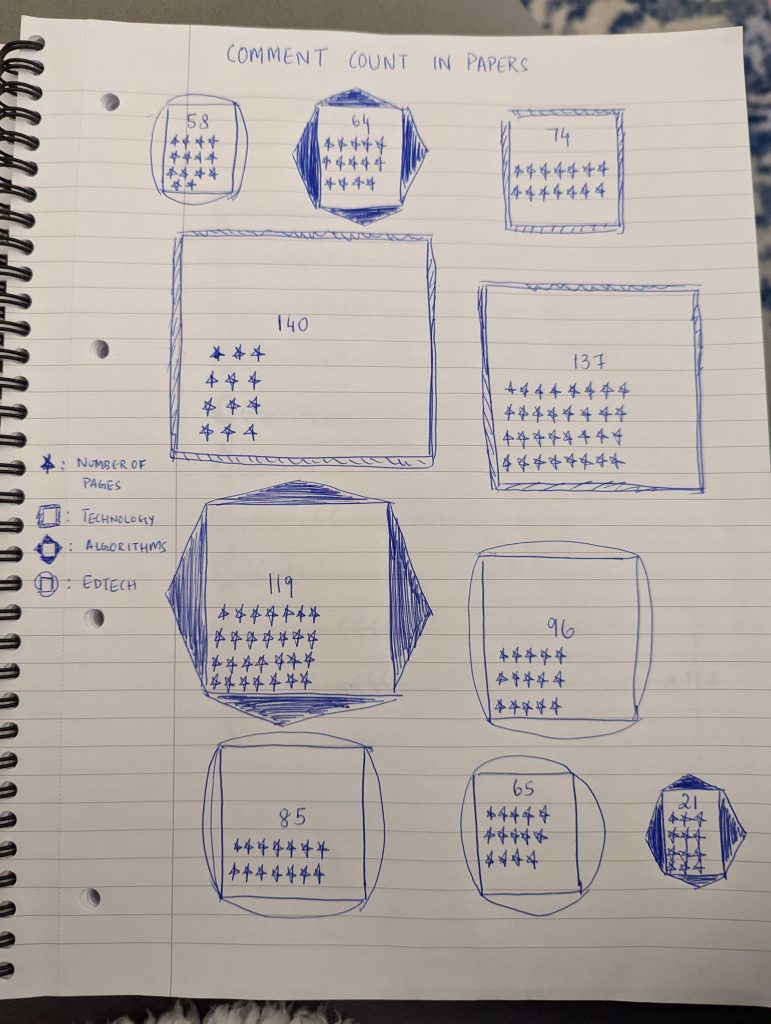

My first visualization anchors around size differences between the squares, where each square represents a paper, and the number of comments in the paper is noted in the square. The larger the number of comments on a paper, the larger the square. The stars within the square represent the number of pages in the paper. I tried to randomize where the squares went so that it isn’t very easy to compare and draw inferences from the data when you look at the visualization. Even so, the bigger squares still seem to cluster in the middle, with the smaller ones on the outside.

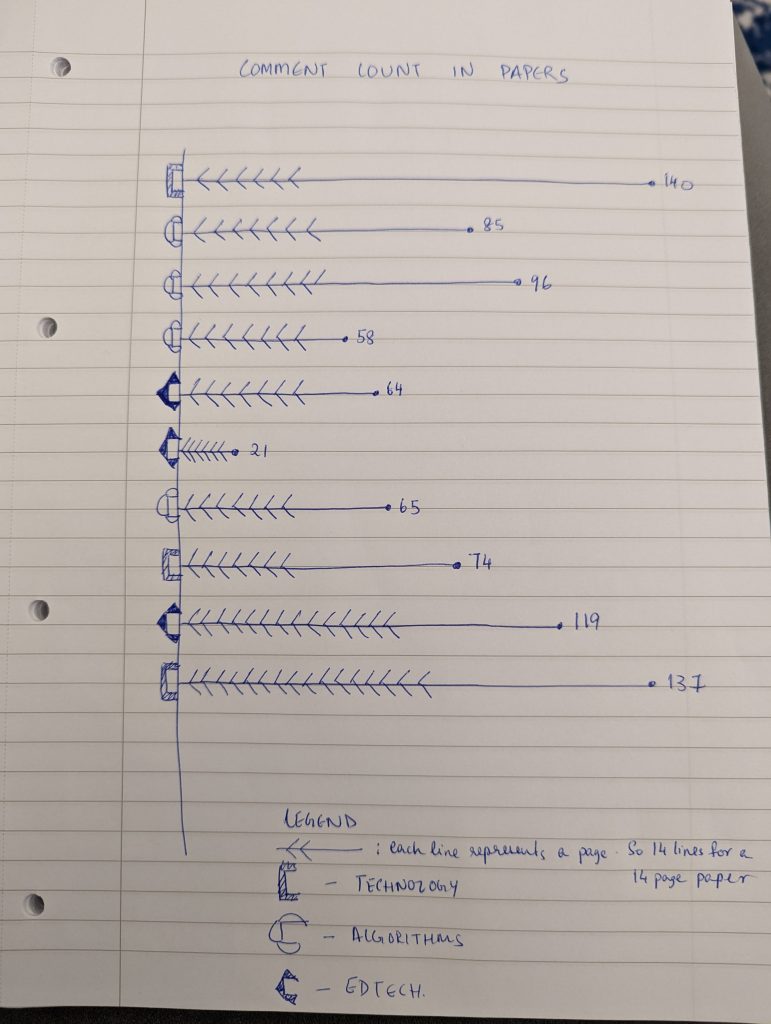

In my second visualization, I created a more streamlined way to see the data. The line length represents the comment count, so it’s easy to see which paper had more comments. The herringbone pattern on each horizontal line represents the number of pages, making it easy to also quickly see which papers were longer (and to quickly assess: did longer papers have more comments?). I also added some patterns around each visual of a paper to depict the broad topic of the reading: Technology, EdTech, Algorithms. These topics all have significant overlap, but I created this also as a way of distinguishing between the papers (again, a conscious choice that does not really make sense, except to create a distinction).

Going back to my initial question, if a teacher had access to this data, what would my digital “traces” say about me as a student? A very easy (and naive) correlation that would be simple to code into a teacher dashboard is to say more comments = more engagement = better learning. But what if I commented more because I did not understand a lot of the paper, and added comments against each line I did not follow? Or perhaps the assumption is right: I deeply engaged with the paper, and had so many thoughts on it that I flooded the paper with comments. How would an algorithm know the difference? You could parse the comments for question marks to know whether my comments are questions or statements (assuming I, in fact, use good punctuation in my comments). This is also a very easy system to game. If I knew I was being rewarded for a higher number of comments, it would be quite easy for me to leave “this is great!” or “this is so important” all over each paper. Not that those comments are bad; what makes them problematic in this scenario is the inferences drawn from them. There could have also been a day when I was reading a paper, took a lot away from it, but my hand hurt and I didn’t have my Apple Pencil with me, and so I didn’t comment in the paper at all. What then? Correlation, causation, all muddled together in one big puddle of digital traces.
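To make the point concrete, here is a minimal sketch of what such a naive dashboard rule might look like. Everything in it is an assumption for illustration: the `PaperTrace` record, the two scoring functions, and the sample comments are hypothetical and are not the data behind my visualizations.

```python
from dataclasses import dataclass


@dataclass
class PaperTrace:
    """Hypothetical trace record for one paper (illustrative, not real data)."""
    title: str
    comments: list[str]


def naive_engagement(trace: PaperTrace) -> int:
    # The naive dashboard rule: more comments = more engagement.
    return len(trace.comments)


def question_share(trace: PaperTrace) -> float:
    # Fraction of comments that end with a question mark.
    # Returns 0.0 when the paper has no comments at all.
    if not trace.comments:
        return 0.0
    questions = sum(1 for c in trace.comments if c.strip().endswith("?"))
    return questions / len(trace.comments)


# Three made-up readers: one confused, one gaming the metric, one silent.
confused = PaperTrace("Paper A", ["What does this mean?", "Why this method?", "Lost here?"])
gamed = PaperTrace("Paper B", ["this is great!", "this is so important", "this is great!"])
silent = PaperTrace("Paper C", [])

for trace in (confused, gamed, silent):
    print(trace.title, naive_engagement(trace), round(question_share(trace), 2))
```

The confused reader and the reader gaming the system receive the same engagement score of 3, while the reader who learnt a lot but left no comments scores 0. The question-mark check separates the first two only if the punctuation is reliable, and it says nothing at all about the third.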

In my own visualization, I used only the data that was readily available to measure: comments, number of pages, the topic of the paper. I had no way to capture the conversations the papers initiated, or how an idea formed in part due to these papers I read. They cannot be measured. I’m sure the point of AI in edtech is precisely to go after these data points: to analyze conversations, to view facial expressions, as a way of filling in the “gaps”. But these will always fall short, while creating a host of other problems along the way. As a teacher, it is inevitable that when presented with certain types of data on students, their attention is drawn precisely to those data points (such as number of comments or highlights), attention that may have focused elsewhere without these metrics presented to them. Williamson, Bayne & Shay (2020) summarize the phenomenon perfectly: “Data and metrics set limits on what can be known and what can be knowable. They define what is rendered visible or left invisible, thereby impacting on how certain practices, objects, behaviours and so on gain value, while others are not measured or valued.” (p.3).

REFERENCES

Brown, M. (2020). Seeing students at scale: how faculty in large lecture courses act upon learning analytics dashboard data. Teaching in Higher Education, 25(4), 384–400. https://doi.org/10.1080/13562517.2019.1698540

Williamson, B., Bayne, S., & Shay, S. (2020). The datafication of teaching in Higher Education: critical issues and perspectives. Teaching in Higher Education, 25(4), 351–365. https://doi.org/10.1080/13562517.2020.1748811

2 responses to “Data Visualization: Comment count in papers”

Thanks for sharing, I really liked the questions you were asking around quantity and quality of the comments that you made in the documents. As you say the quantity does not necessarily reflect a ‘quality’ comment but depending on how Edtech is programmed it could easily consider this as ‘engagement’.

Even as AI progresses it will be interesting to see how interpretations of learner engagement change over time. Will each ‘innovation’ in Edtech be met with new ways that learners can ‘game’ the system?

Hi Meenakshi,

I really like your idea here about capturing comments on papers. I dread to think how many comments I make when reading, as I tend to capture as much as I can to refer back to, then often go back through the notes and highlight what I believe are the most important points.

Your point about whether to capture notes in digital form vs analogue is something I always deliberate over. I love the idea of making annotated notes over a paper, but, as you point out, I usually opt for digital notes so I can easily search my notes afterwards.

I think this following observation is a really good point:

“As a teacher, it is inevitable that when presented with certain types of data on students, their attention is drawn precisely to those data points (such as number of comments or highlights), attention that may have focused elsewhere without these metrics presented to them”

I think the fact that this data is available, and presented in an often simplified way, makes it far too easy to refer to and rely upon. This is dangerous: even though data dashboards usually only offer a proxy of learning, this is rarely highlighted, or not made as prominent as it should be. I think this brings up the issue of EdTech companies that offer these solutions acting responsibly and ethically.